From 0% to 97% AI: Is Turnitin Broken?

As a Master's student and a professional tutor, I rely on tools like Turnitin to act as fair gatekeepers. But what happens when the gatekeeper suddenly becomes unreliable?

Today, I encountered something that shook my confidence in one of the most widely used AI detection tools in academia. It’s a story in two pictures, showing how a single document went from "Clean" to "Flagged" in just six days.

The Evidence

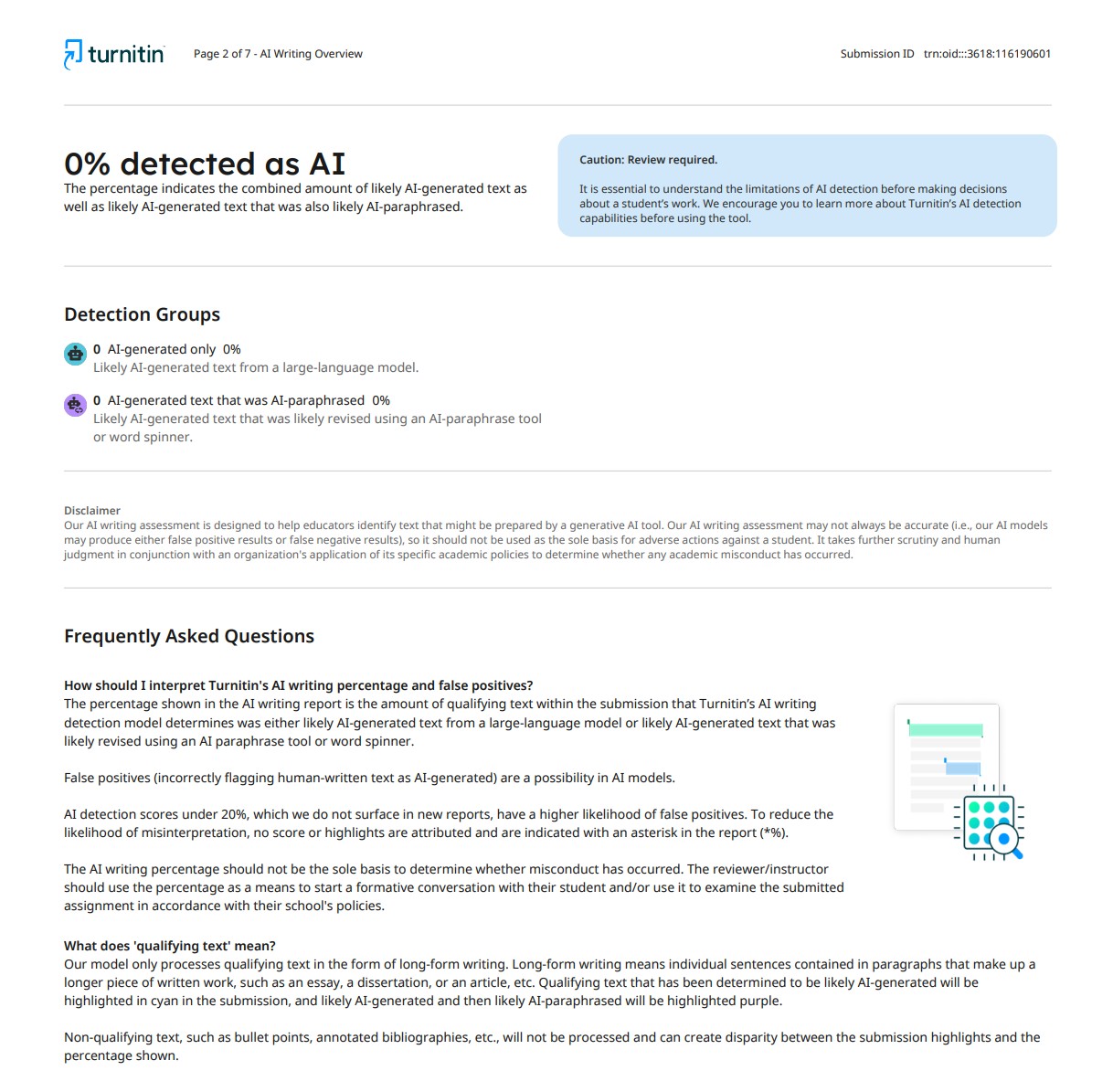

Scan 1: October 10, 2025

Baseline Result

A clean bill of health: 0% AI. This is the baseline we all aim for with original work.

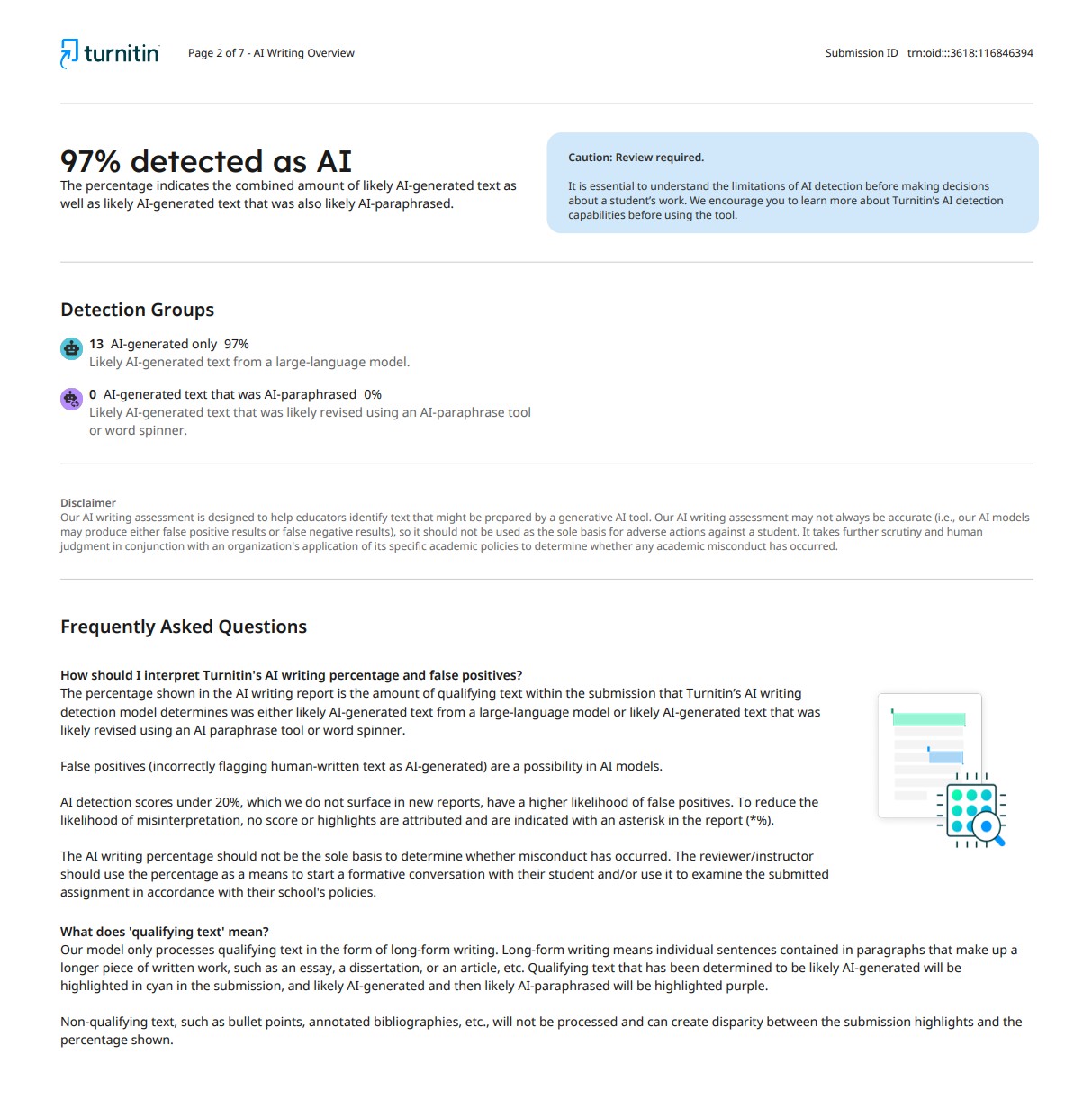

Scan 2: October 16, 2025

Re-upload (Same Document)

Six days later, without a single word changed, the same document scored 97% AI. The result had completely reversed.

My jaw dropped. How could a document go from 0% to a staggering 97% AI-generated in less than a week? A swing this large on identical text suggests a backend update, one that may have made the detector far more prone to false positives.

The Human Cost

For Students

Imagine being a diligent student who spends weeks on a paper, only to be flagged as a cheater. An accusation of academic dishonesty, even a false one, can have severe consequences for a student's career and mental well-being.

For Educators

How can we confidently use a tool that produces such inconsistent results? It erodes our trust and forces us to second-guess every report, potentially leading to wrongful accusations.

This isn't just a technical glitch; it's a critical issue that strikes at the heart of academic trust. I'm sharing this as a cautionary tale.

We need to be more critical of the tools we use. An AI detection score should never be accepted as gospel; it is just one piece of a much larger puzzle.

Have you experienced something similar? Let's hold these tools accountable.